The Magic Lantern Glossary

This glossary should provide fast access to ML related terms and explain them in ML context for ML users.

General rule: It should give short answers and may contain oversimplifications (to the extent of being technically wrong). If the general idea is received: Mission accomplished!

Feel free to add your input!

Preferred link formats:

→ Wikipedia: DIGIC

→ ML Forum: Writing modules tutorial #1: Hello, World!

→ DPReview: Rolling shutter explained with simple side-by-side examples

[[wp>DIGIC|Wikipedia: DIGIC]] [[ml>forum/index.php?topic=19232.0|ML Forum: Writing modules tutorial #1: Hello, World!]] [[https://www.dpreview.com/videos/3277074851/rolling-shutter-explained-with-simple-side-by-side-examples|DPReview: Rolling shutter explained with simple side-by-side examples]]

0...9

1x1

1x3

3x1

3x3

5x3

A

ADTG

The ADTG is believed to be the part responsible for converting the CMOS sensor's analog sensel values into a digital data stream and for controlling the sensor's clock and address lines. It also seems to act as an accurate timing generator for this purpose.

→ https://magiclantern.fandom.com/wiki/ADTG

→ CMOS/ADTG/Digic register investigation on ISO

Aliasing

Anamorphic

The term “anamorphic”, when used in a Magic Lantern context, often refers to the 1×3 pixel binning setting applied during the image readout, which stretches pixels in a way that very much resembles the use of an anamorphic lens. No special lens is required, but the footage needs correction (de-squeeze) in post in order to look normal again.

Since we are working with spherical lenses, the anamorphic optical characteristic artifacts - such as lens flare or cylindrical perspective - will not apply here. As such, some users prefer to name their presets strictly using the term “1×3 binning”, to avoid confusion with anamorphic optics.

Why film like this? To some users, 1×3 binning is seen as the perfect compromise, making it possible to achieve an almost aliasing-free image on cameras that normally use 3×3 pixel binning with line skipping for 1080p video (that is, on cameras plagued by aliasing, moiré and false detail issues).

1×3 binning requires a lower data rate than the so-called 1:1 crop mode, allowing for greater resolutions. Early tests using 1×3 binning footage were done by oversampling the footage vertically, mainly on Dual ISO movie recordings. Oversampling (e.g. 1736×2214 → 1736×738) was a good way to get rid of most Dual ISO interpolation artifacts. Nowadays, 1×3 footage will normally be upscaled during post-processing (e.g. 1736×2214 → 5208×2214), although the image quality will not match a full-resolution 1:1 readout. That is, it won't match a native 5K (full resolution) image, but it will be much better than a native 1080p recording from the same camera.
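
The two resolution examples above are plain integer arithmetic on the binning factor. A minimal sketch in Python; the function names are made up for illustration and are not part of any ML tool:

```python
BIN_FACTOR = 3  # 1x3 binning: three sensor columns are combined into one

def desqueeze(width, height, factor=BIN_FACTOR):
    """Stretch 1x3 footage horizontally so it looks normal again."""
    return width * factor, height

def oversample_vertically(width, height, factor=BIN_FACTOR):
    """Shrink 1x3 footage vertically instead (the early Dual ISO approach)."""
    return width, height // factor

print(desqueeze(1736, 2214))              # (5208, 2214)
print(oversample_vertically(1736, 2214))  # (1736, 738)
```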

→ Pixel Binning

→ Simulation: 1x3 "column binning" vs 3x1 "line skipping" vs 3x3 binning/skipping

Aperture flicker

Unwanted effect in time-lapse recordings caused by small variations in aperture actuation from selected aperture number.

Every time a pic is taken, aperture blades will move from “open” to selected aperture and back. Consumer lenses with electronically controlled aperture blades are generally not able to repeat aperture actuation with infinite precision. Thus small but noticeable variations in exposure may result in displeasing flicker.

Workarounds to avoid aperture flicker:

- Use manual lenses

- Use electronic lenses wide open only. (No aperture blades moving → no aperture variation)

- Use electronic lenses but twist lens in mount while DOF preview is activated. Aperture blades will be locked in position → no aperture variation. Take care to avoid lens getting completely unlocked and falling off the camera.

ARM (architecture)

Dev lingo. The Digic chip inside your camera contains a microprocessor based on the ARM architecture. This microprocessor runs a piece of software developed by Canon and called Firmware, which controls a large part of camera functionality. We consider this processor to be the most important one (main CPU), and that's where Magic Lantern code actually runs, alongside Canon's own firmware.

Canon cameras contain several other secondary processors, but we don't reprogram them (at least, not for the time being). Some of these secondary processors are also based on the ARM architecture, but many of them are not.

ARM Limited doesn't sell hardware, but develops and sells/licenses processor designs to other companies. This concept is quite successful and several embedded systems, including consumer electronics, are equipped with an ARM processor.

Knowledge of ARM architecture is essential for reverse engineering in the ML project.

→ DiGiC

→ Wikipedia: ARM architecture

→ HACKING.rst: Initial Firmware Analysis (covers technical details about the ARM processors used in Canon cameras)

Auto ETTR

B

Bayer

Binning

Bitbucket

Dev lingo: ML's former hoster for Mercurial (a tool for collaborative software development). Bitbucket no longer supports Mercurial. Current hoster is Heptapod.

Blindly Maintained

A camera running Magic Lantern but without a maintainer is called blindly maintained. This may happen after ML was ported to the camera some time ago and the former maintainer is no longer working on it.

Such camera models will get updates when new builds are published, but there may be nobody feeling responsible for basic testing and quality control. Bugs may get introduced and remain unnoticed until a user stumbles across them and files a bug report.

To get around this limitation, we are trying to implement automated testing in QEMU.

Bootable Card

A bootable card is an essential part of ML's startup process. During installation, some information is written into a hidden section of your installation medium (the first sector of the memory card, aka “boot sector”).

If a card is formatted outside the camera, or formatted in a camera not actively running ML, this information will get lost. Boot ability will not be affected by deleting files or directories.

To make additional cards bootable, it is recommended to redo ML installation for each card.

Bootflag

Some information written and stored in cam during ML installation. It will only be deleted by proper deinstallation.

A cam with bootflag set will check if there is a bootable card found in cam's card slot(s). It's an essential part of ML's startup process.

Branch

Developer lingo.

In collaborative software development you don't change a running system (= build). You first create a copy of the build you want to change and that's called a “branch”. There you rewrite code and test your changes. After successful testing you want to “merge” the changes of your branch into the build. This requires several steps:

1. A code review initiated by a “pull request”

2. A “commit” after successful code review → Code will be merged.

3. (Optional) You may delete the branch now (or keep it for further/long time developments).

Bricking

Build

C

Canon Basic Scripting

Canon introduced Basic Scripting support in EOS camera line-up with Digic 8. It is available for all EOS cams hosting DiGiC 8 and X processors. The same functionality is a long-standing part in PowerShot cameras (there for older DiGiC generations, too).

It makes a dev's life a lot easier by allowing the cam's bootflag to be set with a few simple and portable lines of code. And other things, too.

If you ever come across a statement like “Canon making ML development harder by introducing locked down cameras”: This is proof of the very opposite!

CHDK

Our friends and frequent contributors from the Canon Hacking Development Kit (CHDK) enhance PowerShot digital cameras with some features not implemented by Canon. This is similar, in some aspects, to what the ML project does with EOS.

There are some major differences in how PowerShot and EOS have to be programmed, and that is why there are 2 different project teams.

It may confuse some people that there are a few EOS M cameras not handled by ML but CHDK. And there are 6 PowerShot cameras not located in CHDK realm but ML. Those cameras run code contrary to their names!

EOS M3, M5, M6, M10, M100 work with PowerShot firmware and are therefore handled by CHDK.

PowerShot SX70, SX740, G5 X Mark II, G7 X Mark III, V10, Zoom work with EOS firmware and can be ported to ML.

Link to CHDK project home page

Clean HDMI

In ML context “Clean HDMI” means: No visible overlays. No blinking red recording dot, no focus box.

It does not guarantee you get: No black borders left and right, content in 16:9, seamless streaming for longer than 29:59, flawless AF, …

ML can enforce clean HDMI in all AF modes: Display tab/screen → Clear overlays

Collaboration (software development)

Despite the popular Hollywood movie trope, a “normal” programmer doesn't work alone and save the world by rewriting some green hieroglyphs in a live system twice a day.

The normal thing in software development is teamwork. And when several people are changing a complex/long piece of software at the same time, it becomes quite difficult to maintain oversight and keep quality up.

There are tools and procedures available to establish an environment where people can change code without overwriting or deleting other people's changes. Examples: Git, Mercurial → List_of_version_control_software#Distributed_model

Different hosts may support one or several of these tools.

The majority of Open Source software development uses Git. ML project uses Mercurial (in the past hosted by Bitbucket, today by Heptapod.)

Commit

Developer lingo and a part of collaborative software development.

Also called “changeset” in Mercurial.

A change (modification) of the source code, to one or more files, stored in a version control repository. Each commit has a description attached, a unique hash for identification, and some metadata (author, date/time and so on).

Ideally, commits should be atomic (they should contain work on one feature only). This provides several advantages, making the development process much easier to follow.

→ https://book.mercurial-scm.org/read/tour-basic.html

→ Wikipedia: Commit (version control)

→ Pauline Vos: Atomic commits

→ Fagner Brack: One commit, one change (atomic commits)

→ Repository

Compression

Compression is the method to minimize data space required. For example: A string like “Abba Abba Abba Abba ” (= 20 characters) can be “compressed” into the info “4*Abba ” (= 7 characters). Of course in real life computer compression methods work completely different!
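
The “Abba” example can be played through in a few lines of Python. This is only a toy, matching the oversimplified example above: the `compress` and `decompress` names and the `count*unit` notation are made up here and have nothing to do with real compression methods.

```python
def compress(text, unit):
    """Replace a text made only of repeats of `unit` by 'count*unit'."""
    count = len(text) // len(unit)
    if unit * count != text:
        raise ValueError("text is not a whole number of repeats of unit")
    return f"{count}*{unit}"

def decompress(packed):
    """Reverse compress(): '4*Abba ' becomes 'Abba Abba Abba Abba '."""
    count, unit = packed.split("*", 1)
    return unit * int(count)

original = "Abba " * 4
packed = compress(original, "Abba ")
print(len(original), len(packed))      # 20 7
print(decompress(packed) == original)  # True
```

Because decompression returns exactly the input, this toy behaves like the lossless kind described next.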

There are two different kinds of compression:

Lossless: Data output after compression/decompression is exactly the same as data input. No information is lost! The process is reversible each and every time!

This kind of compression is mandatory for data backup (for example). This method is used for Canon's CR2 data format (see LJ92). You may be aware that CR2 files from your camera differ in size, but all CR2 contain all data for the same number of pixels (for a given camera, of course).

Lossy: During compression, a decision is made regarding which data will be stored or not. For example: During JPEG compression, a computer algorithm determines which parts of the picture are most likely to be ignored by human perception. Those parts don't make it into final JPEG. This may be a small or a large part, depending on scene. Consequently, if you redo lossy compression with a single JPEG very often, you will finally see degradation! But for a single conversion, it may be really hard to spot the difference.

BTW: All your DVD and BluRay media are compressed. You may not even have noticed. It works because the human perception is easily tricked into ignoring specific details, esp. in video.

ML/Canon camera specific part:

→ LJ92

Crop

Two possible meanings

→ Pixel binning (1:1 crop mode)

→ Crop factor (35mm equivalent)

Crop Factor

→ Wikipedia: Crop factor

→ Cameraville: 35mm Equivalent Focal Length Comparison Visualizations & Examples for Crop Sensors

crop_rec

A ML module that disables the pixel binning process in LiveView (and also when recording video, of course), resulting in the image being captured from a center crop of the camera sensor (also called 1:1 crop). Normally, 1080p video recording in Canon cameras is done by reading out the entire sensor area, using a 3×3 pixel binning (with or without line skipping, depending on camera model).

Announced on April 1st, 2016.

→ ML Forum: Crop mode recording (crop_rec.mo) (1:1, RAW/H.264, 25/30/50/60 fps)

crop_rec_4k

An April 1st joke where we claim to be able to record 4K video on Canon EOS cameras (something we thought to be impossible). Turns out, we actually can, with some limitations.

Actually it's a more advanced (more complicated?) version of crop_rec, which allows changing raw video resolution, frame rate and pixel binning factors.

Announced on April 1st, 2017, further developed by the community since 2018-2019.

→ Petapixel: Magic Lantern Adds 4K RAW to Canon 5D Mark III, Announces It on April 1st

→ ML Forum: crop_rec and derived builds

Cursed cam

A term used by ML devs in our Discord server.

It may refer to a cam troubled by limited resources such as

- Exceptionally low display resolution (320×240: 1100D, 4000D)

- Scarce internal memory (1100D, …)

- Common cam features missing (4000D, …)

- …

Supporting such cams without dropping ML features may cause troubles in development.

Custom Build

An ML version developed by a dev on his own system/repository.

They don't participate in the review process for nightly builds.

D

Dev

Developer

A developer (dev) in ML microcosmos is a person doing ML programming, porting, maintaining.

DiGiC

Digital Imaging Integrated Circuit

Canon's designation for camera's main processing unit. There are several generations mostly based on ARM architecture.

At the moment Magic Lantern supports EOS cameras hosting Digic 4 and Digic 5 but not all of them.

Canon's current EOS lineup features mostly Digic 8 and X (X = 10 in roman numeral). Some cameras with Digic 6 and 7 are listed, too.

Digic 4 is still in use for entry-level DSLRs like 2000D/Rebel T7 and 4000D/Rebel T100.

Dual-ISO

Dual-ISO uses an alternating line scanning technique to increase dynamic range. Each alternating dual line is scanned at different ISO values and later combined to produce an image (or video) with an increase in dynamic range similar to taking two images at the different ISO values.

As a single-image technique it produces no motion artifacts. The drawbacks are a loss of resolution in the highlights and shadows, and aliasing.

→ Dynamic range improvement for some Canon dSLRs by alternating ISO during sensor readout

→ Dual ISO - massive dynamic range improvement (dual_iso.mo)

Duplicate Question (Forum section)

Maybe you will be surprised to find your forum post here because you created it elsewhere. What happened? An administrator or moderator judged your very question/request to be redundant because the same question was asked and answered before or may be even an F.A.Q. item. Most of the time no other indication about what you did wrong will be given but this magical replacement.

Instead of going ballistic about it you should thoroughly check F.A.Q. and/or use forum search (or external search engine) to look after an answer. Only if you are absolutely sure you have been mistreated you may want to post a reply to explain your trouble giving more details.

And don't reply to other people's Duplicate Question! Just don't!

Dynamic Range

The numerical value describing the ratio between the largest and smallest value that can be assumed. In photography, this value describes the difference in stops between the brightest (not overexposed) pixels in the captured image and the darkest pixels that the camera can capture in the same image.
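
Since one stop is a doubling of the signal, the dynamic range in stops is the base-2 logarithm of the ratio between the brightest and the darkest usable value. A small sketch; the function name and the two sample values are invented for illustration and are not measurements from any camera:

```python
import math

def dynamic_range_stops(brightest, darkest):
    """Dynamic range in stops: each stop is a doubling of the signal."""
    return math.log2(brightest / darkest)

# Made-up values: brightest usable level 16384, darkest usable level 4
print(dynamic_range_stops(16384, 4))  # 12.0
```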

E

Embedded System

Generally speaking some kind of computer designed to run specific tasks on a specific hardware. Their software is not made to install and run additional user software. Software running in embedded systems is generally called firmware and is not interchangeable. Processors used in embedded devices mostly have nothing to do with general purpose processors running in your PC.

You will find embedded systems in all kind of electronic devices: GPS, AVR, kitchen appliances, cars, USB-sticks, … and of course cameras.

The number of embedded systems existing outnumbers PCs of any kind by far!

See → firmware

EOScard

A Windows utility programmed by user Pelican. Used to make a card bootable or prepare a card for Canon Basic Scripting.

It was in wide-spread use by Canon community some time ago because back then it was the only option to prepare SDXC cards for ML. Modern ML installation method handles those cards, too.

EOScard is still used for Canon Basic Scripting and sometimes to prepare cards for analyzing bricked cams.

Related app for macOS/osX is MacBoot.

Linux users may use make_bootable.sh.

ETTR

→ Exposing-to-the-right (ETTR) is a method of increasing the Signal to Noise ratio (SNR) in all areas of a picture and for capturing greater tonality in a RAW file. The greatest benefit will be noticed in the shadow areas of the image, but ETTR will also increase the SNR in the entire image due to the effects of shot noise.

Manually it may be achieved by exposing images so that the highlights are pushed as far to the right of the histogram as possible, but, critically, short of saturation. The ML RAW histogram, the ML RAW spotmeter and ML RAW zebras are thus great tools to help with manually setting an ETTR exposure.

For 'pure' ETTR use, set mid and highlight SNR values to OFF, ie zero, and adjust the highlight ignore as required, noting that each scene may require the auto-ETTR settings to be tweaked, eg a flat landscape scene vs a high dynamic range cathedral scene, or accounting for speculars.

The ML ETTR menu has additional/advanced attributes that can be explored, eg links to Canon shutter etc.

Of course, any ETTR based capture will require post processing.

Auto-ETTR works from M mode, and can be triggered from liveview or from outside of LV.

→ ML Forum: Auto ETTR - What all those settings mean and how to use them

Event Procedure

A function in the Canon firmware that can be called by string value. They can be executed with Canon Basic scripts, through PTP, or through the call function in C. Internally, these are known as evprocs.

Experimental Builds

Exposure

The amount of light that reaches the sensor in a single shutter cycle.

F

Feature Request

Firmware

More or less the same as your computer's OS (Windows, macOS, Linux, …), but made for an "embedded system". Generally speaking, it is not designed to be a platform for additional software installed by a user, but to run on specific hardware for a specific purpose. You will find firmware inside many devices you never thought about. Of course your camera runs firmware provided by Canon. But your digital medical thermometer has its own firmware, and your USB stick, too. Canon's firmware allows additional programs to run alongside all the tasks Canon designed. This is how ML runs, and it is therefore not called firmware but a firmware add-on. See also Firmware update/upgrade/downgrade

Firmware Add-On

Software running on an embedded system without replacing the original firmware.

Firmware update/upgrade/downgrade

This entry is about updating/downgrading Canon firmware for your camera. It is not about changing Magic Lantern files and directories.

According to Canon there is only one way to go for firmware files: Up! Their documentation says it is not possible to go to an older version. But in fact this only applies to the very first firmware version of a particular camera after release. These first versions are not available as downloadable files. This means that all available firmware files for your camera can be installed.

Links to proper firmware files for your ML nightly build can be found in the installation instructions for your camera: → Download Nightly Builds → Installation or → Useful Links.

Those files are valid for experimental and custom builds, too. Make sure the firmware version and the ML build match!

Downgrading to an earlier firmware version may be an essential step to make Magic Lantern run on a camera, because an ML build must match the corresponding firmware version. This restriction is mandatory to prevent harm to your equipment!

How-to:

Follow Canon's instructions (coming with firmware file) to install firmware and ignore the part where it says it cannot be done.

If you are unable to downgrade check your camera and firmware version:

5D Mark III: Firmware version 1.3.x up to 1.3.5 can be downgraded by using EOS Utility. It is not possible to use firmware update menu in cam without tweaking. See How to do a Canon firmware downgrade.

Firmware version 1.3.6 can only be downgraded by a tricky procedure found by user Apollo7 and fine-tuned by a1ex: → Install procedure for 5D3 1.2.3 → Method B

“Method B” is valid for any EOS camera denying firmware downgrades!

PS: If you forget to check firmware and ML version … nothing bad will happen. ML installation checks firmware version at the beginning and (in case of mismatch) just terminates with an error message. No harm will be done!

Fixed-pattern noise

Focus Pixels

FPN

FPS

Frames per second. Number of frames (images) captured per second, while recording a video.

Usual values (PAL):

- 25

- 50

- 100

Usual values (NTSC):

- 23.976 (24*1000/1001)

- 29.970 (30*1000/1001)

- 59.940 (60*1000/1001)

- 119.880 (120*1000/1001)

The time allocated for each video frame - in seconds - would be 1/FPS. For example, when recording at 25 FPS, that means a video frame is captured every 40 milliseconds (1/25 = 0.04 seconds).
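
The NTSC values and the 1/FPS rule can be checked with exact fractions. A short sketch; the helper names are invented for this example:

```python
from fractions import Fraction

def ntsc_rate(nominal):
    """Exact NTSC frame rate: nominal * 1000/1001."""
    return Fraction(nominal * 1000, 1001)

def frame_time_ms(fps):
    """Time allocated for one frame, in milliseconds (1/FPS)."""
    return float(1000 / Fraction(fps))

print(frame_time_ms(25))                       # 40.0
print(round(float(ntsc_rate(24)), 3))          # 23.976
print(round(frame_time_ms(ntsc_rate(30)), 3))  # 33.367
```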

FPS override

ML feature to change the frame rate used for video recording, or for LiveView.

It also affects and/or displays rolling shutter timings.

FRSP

Full Resolution Silent Pic → Silent Pic

Full Resolution Silent Pic

G

Git

Dev lingo: A method/tool for → collaborative software development.

Global Shutter

A sensor's ability to grab all pixels at the same time. Canon cameras (like most other consumer cameras) don't have it! See Rolling Shutter

H

H.264

Canon's way of storing video streams to card (MOV files). May also be called “native” recording.

When talking about H.264 here we are referring to Canon's implementation of the broader H.264 definition set and what kind of data manipulation happens on the way from sensor to final MOV file.

Because said implementation is done in hardware ML ability to change anything is limited. See hardwired.

Hardwired

Dev lingo:

Some functions in Canon cameras are run by dedicated hardware. Dedicated hardware may have a single task which can be performed very fast. There may be only limited ways (or none) to manipulate the inner workings of said hardware.

Such functions are called *hardwired*.

Example: H.264 video encoding. It works just with given specs and cannot be changed by ML devs.

HDR video

High Dynamic Range: A method to enhance the cam's limited dynamic range. Video in general uses the same technique as HDR photo: The camera shoots several frames with different exposure settings. During postprocessing all frames belonging together are merged into a single frame.

Consequently, the frame rate of the merged HDR video = frame rate of camera / number of shots with different exposure.

In HDR video exposure duration (“shutter speed”) and aperture cannot be changed like in HDR photo. The only remaining option to manipulate exposure is ISO.

Things to consider:

Because ML supported cams have quite a limited highest frame rate the number of frames to merge is kept to a minimum and that is 2. Thus ML HDR videos output has half the frame rate used by cam during recording.

There is software able to restore (as good as possible) original frame rate. For example: Twixtor (Payware!).

As in HDR photo mode scenes with fast movements (= different frame contents) are problematic because merging such frames is prone to generate disturbing artefacts.
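
The frame rate rule stated above, as a one-line calculation. The function name is made up; the default of 2 exposures reflects what this entry says ML uses:

```python
def hdr_output_fps(camera_fps, exposures_per_frame=2):
    """Frame rate after merging: camera fps / number of bracketed exposures."""
    return camera_fps / exposures_per_frame

print(hdr_output_fps(50))     # 25.0
print(hdr_output_fps(60, 3))  # 20.0 (hypothetical 3-exposure case)
```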

Do not confuse HDR video mode with Dual-ISO video! Both serve the same purpose (enhancing dynamic range) but are using different techniques.

HDR video uses different exposures in consecutive frames. Resulting frame rate is half of cam's frame rate. Not well suited for fast moving scenes.

Dual-ISO does not alter frame rate because each single frame contains 2 different ISO settings in alternating pixel lines. Vertical resolution is affected, esp. in high- and low-exposed areas.

Heptapod

Dev lingo: ML's hoster for Mercurial (a tool for collaborative software development).

Histogram

The histogram provides a graphical representation of the exposure of the entire image.

Magic Lantern has raw based exposure feedback.

HTP

Highlight Tone Priority (option in Canon menu).

It works by capturing the image at the next lower ISO (for example, ISO 200 with HTP would give the same raw image as regular ISO 100). This gives 1 stop of additional headroom for capturing highlights, at the expense of about 1 stop of additional noise in midtones and shadows.

When shooting RAW (CR2 or MLV), there is no point in using this option.

The main point as far as HTP is concerned is that the sensor has a fixed amount of light that it can capture. Remember, this is a mechanical limitation. HTP will not, can not, and does not change this fact (period!).

So, you're probably asking yourself: how the hell do I seem to gather more highlight detail when I have HTP activated? Simple, your camera meter is lying to you.

→ ML Forum: HTP (highlight tone priority) and DUAL_ISO? OK? No-no?

I

ISO

System used to describe the relationship between exposure and output image lightness in digital cameras. In a digital camera the sensor has a fixed sensitivity, or more accurately, a defined quantum efficiency.

ISO is thus a gain applied to the voltage output from the sensor.

ISO is a photographic sensitivity setting. On Canon cameras especially, ISO has a demonstrated effect on image noise.

→ Wikipedia: Film speed - 12232:2019 standard

→ ISO standard 12232:2019

→ Roger Clark: What is ISO on a digital camera?

→ CMOS/ADTG/Digic register investigation on ISO

→ Emil Martinec: Noise, Dynamic Range and Bit Depth in Digital SLRs

→ HTP

J

JTAG

JPCORE

Dedicated hardware used by Canon to implement various types of image compression (and decompression):

These operations would be way too slow if they were to run on the general-purpose ARM processor. As such, in order to make these operations fast enough for real-time video encoding, Canon implemented them on dedicated hardware, optimized for this particular task.

JPCORE is believed to be processor-based, possibly some kind of DSP. For practical purposes, these operations can be considered hardwired, as we have little to no idea on how to reprogram the code running on JPCORE.

K

L

Line Scanning

A technique of scanning a horizontal line of photosites.

Dual ISO alternates between scanning two horizontal lines of photosites to ensure the RGGB bayer is captured correctly.
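
A rough way to picture the alternation is a function that maps a sensor row to the ISO it is scanned with. This is a simplified sketch: the function name, the two ISO values, and which pair of lines comes first are assumptions for illustration, not taken from the dual_iso module.

```python
def row_iso(row, iso_a=100, iso_b=1600):
    """ISO used for a sensor row when rows alternate in pairs of two."""
    return iso_a if (row // 2) % 2 == 0 else iso_b

print([row_iso(r) for r in range(8)])
# [100, 100, 1600, 1600, 100, 100, 1600, 1600]
```

Switching every two lines keeps one complete RG/GB line pair of the Bayer pattern at each ISO.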

Line Skipping

A variation of Pixel Binning, which only combines the pixels from one single line, and discards the data from the other lines in a 3×3 or 5×3 block.

Advantages (compared to “pure” pixel binning):

- Easier to implement with analog electronics

- Easier to drive the image sensor at higher frequencies? (easier to achieve a higher pixel clock? just a guess)

Disadvantages (compared to “pure” pixel binning):

- Poor performance on high-frequency details (severe aliasing/moiré issues on a resolution chart);

- Only 1/3 of the sensor pixels (for 3×3 binning with line skipping, i.e. 1080p), or 1/5 (for 5×3 binning with line skipping, i.e. 720p), will contribute to the final image.

Neutral (compared to “pure” pixel binning):

- Same data rate (assuming identical resolution and frame rate).
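
The 1/3 and 1/5 figures above follow from counting pixels in one block. A small sketch, assuming the first number in “3×3” or “5×3” counts lines and the second counts columns, and that only one line per block is read (the function name is invented):

```python
from fractions import Fraction

def used_fraction(lines, columns, lines_read=1):
    """Share of pixels in a lines x columns block that reach the output."""
    return Fraction(lines_read * columns, lines * columns)

print(used_fraction(3, 3))  # 1/3
print(used_fraction(5, 3))  # 1/5
```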

→ Pixel Binning

→ Simulation: 1x3 "column binning" vs 3x1 "line skipping" vs 3x3 binning/skipping

Live View

Live view is the digital display on a camera that displays instantly what the camera is 'seeing'.

→ What is live view? How to make the most of this feature on your DSLR

LJ92

Lossless JPEG '92 - Canon's compression method used in CR2.

Magic Lantern reuses the same method to achieve raw video recording with lossless compression (currently implemented in crop_rec_4k and derived experimental builds). Canon's LJ92 implementation is fast enough for real-time video compression, as it's implemented on dedicated hardware codenamed JPCORE.

→ compression

→ https://thndl.com/how-dng-compresses-raw-data-with-lossless-jpeg92.html

→ ITU Recommendation T.81 (Annex H - Lossless mode of operation)

→ cr2_lossless.pdf (CR2 lossless poster)

→ cr2_poster.pdf (CR2 file format)

→ Canon Raw v3 (CR3 file format -

Lossless Compression

Lossy Compression

LUA Scripting

LUA Scripting is the easiest approach to add own automation tasks to ML.

Examples are:

- Script for shooting a whole set of total eclipse photos with all critical phases an astronomer has to get.

- Script for focus stacking for landscape, architecture with real-time calculator and user interface.

Main difference to programming ML (autoexec.bin) and modules: A scripting language doesn't need to be compiled. It runs line by line (simplified) and contains readable text. And the only thing needed to make such a file is a text editor.

Lua_fix

Short for “lua_fix build”. An experimental build which (as the name implies) contains some fixes/enhancements for ML's LUA Scripting.

For streaming/vlogging it allows for uninterrupted USB/HDMI connections. 29:59 limit does not apply! To enable this feature access ML menu by pressing cam's trashcan button and go to Prefs tab/screen → PowerSave in LiveView. Change setting for “30-minute timer” to Disabled.

Installation instructions for the lua_fix build are the same as for nightly builds. Just open the Nightly Builds download page and look for your cam's installation procedure.

Download from https://builds.magiclantern.fm/experiments.html → Latest Lua updates + fixes

M

Main Build

Maintainer

A maintainer in ML microcosmos is a developer feeling responsible for a particular camera model. Maintaining a cam consists of tasks like programming, testing, quality control, porting ML to new firmware versions.

Cameras without a maintainer are called blindly maintained.

Memory patches

Mercurial

Dev lingo: A method/tool for → collaborative software development. Used by ML.

ML Framing

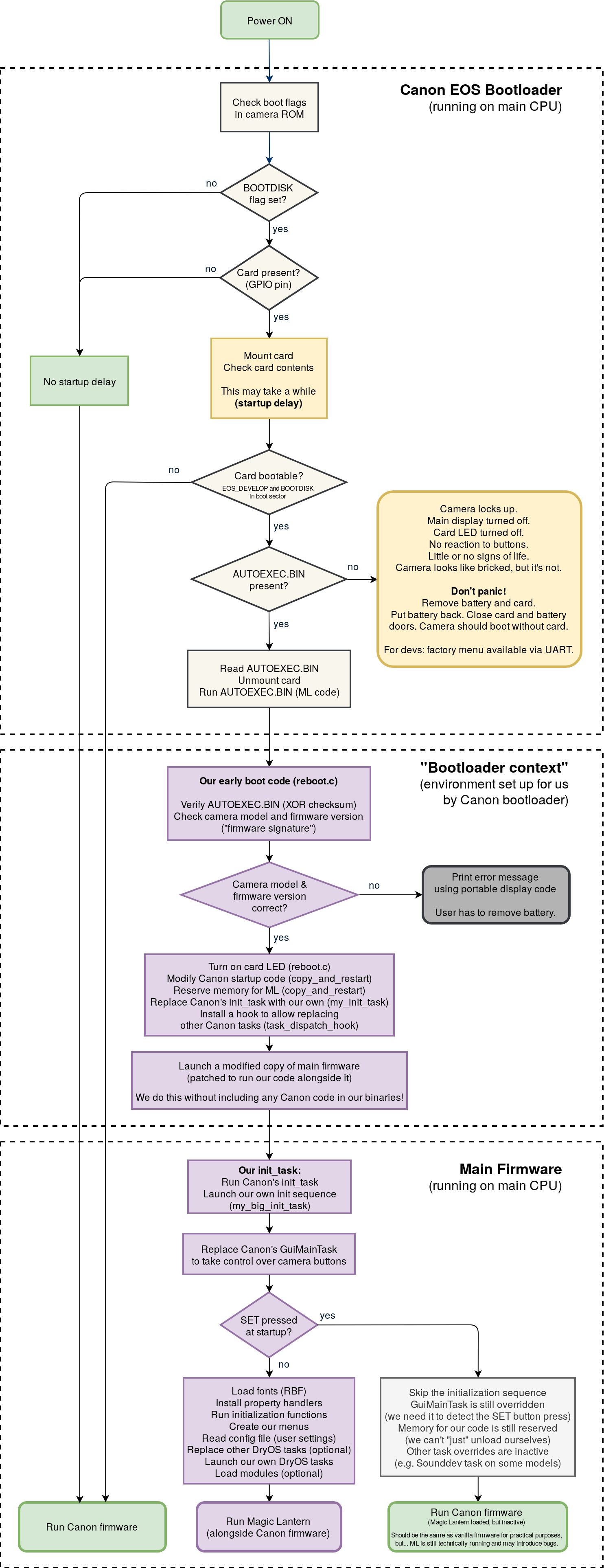

ML Startup Process

ML needs 3 things to start on a supported cam:

- Camera with bootflag set

- Bootable card

- Card containing Magic Lantern files and directories

Startup order (simplified)

1) During Power Up, the bootflag will force the camera to check whether the card is bootable.

→ 2a) If card is not bootable, camera will startup with plain Canon firmware. → ✔

→ 2b) If a bootable card is detected, camera will try to locate ML's “autoexec.bin” file.

→ → 3a) If autoexec.bin is not found, camera will get stalled and remain in this state until battery is removed → ✘

→ → 3b) If autoexec.bin is found, camera will load autoexec.bin and starts up with Canon firmware + ML features → ✔

Detailed startup process on the flowchart →

Bypassing ML startup

By pressing SET during power up, camera will start with plain Canon firmware.
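
The simplified startup order can be written as a small decision function. This is only a sketch of the steps listed above, not ML's actual boot code: the function name and return strings are invented, and both the behaviour without bootflag and the point where the SET button is checked are assumptions.

```python
def startup(bootflag_set, card_bootable, autoexec_found, set_pressed=False):
    """Return how the camera ends up after power-on (simplified)."""
    if not bootflag_set or not card_bootable:
        return "Canon firmware"                    # step 2a
    if not autoexec_found:
        return "stalled until battery is removed"  # step 3a
    if set_pressed:
        return "Canon firmware"                    # ML startup bypassed
    return "Canon firmware + ML"                   # step 3b

print(startup(True, True, True))   # Canon firmware + ML
print(startup(True, True, False))  # stalled until battery is removed
```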

MLV

Magic Lantern Video format.

ML's way to store raw data streams to storage cards. Structured to hold essential recording info like shutter speed, aperture, time code, …

MLV requires postprocessing and conversion because it is not widely supported. Adobe and Blackmagic applications (and others) know nothing about MLV files.

MLV is the successor of the outdated format recorded by RAW_REC.mo.

There are two varieties:

MLV 2.0

Provided by module mlv_rec.mo

Full feature set for best flexibility, reliability and recovery/repair.

Supports audio recording by module mlv_snd.mo

MLV lite

Provided by module mlv_lite.mo

Current version is 1.1

As the name implies, it is derived from “full” MLV. It sacrifices some features for performance reasons.

Module

Some parts of ML's feature set are not loaded by default. To use them, open the Modules tab/screen, activate them and restart the camera. After power-up, the module's menu options are visible in ML's tabs/screens.

Some developers created custom modules not included in zipped ML builds. You can add a module by placing its module file (*.mo) into the card directory ML\Modules.

There are two reasons to place ML features into modules:

- Placing all functions into one single piece of software bloats memory requirements and may exceed camera limits for available memory during startup.

- Modules are in some ways easier to develop, thus lowering the bar to make features happen in ML.

Well known ML features coded as modules are: RAW/MLV recording, Dual-ISO, Silent Pics, ETTR …

N

Native resolution

Resolution used to read out an image from the camera sensor.

In still photo mode, resolution (WxH) is equal to number of active photosites on the image sensor (as expected).

As a consequence of pixel binning used in LiveView, native resolution in 1080p video mode is equal to full image resolution divided by 3 in both dimensions. For example:

- 5D Mark II: CR2 resolution 5616×3744 (official), 5634×3753 (dcraw), 1080p raw video resolution 1878×1251 (1878×1056 for 16:9 aspect ratio). H.264 video is interpolated from 1856×1044 to reach 1080p.

- 5D Mark III: CR2 resolution 5760×3840 (official), 5796×3870 (dcraw), 1080p raw video resolution 1932×1290 (in practice, we only use 1920×1280). For some reason, Canon firmware interpolates H.264 (1920×1080) from 1904×1072.

: examples from other cameras.

: detailed proof showing above resolutions.

In the same way, maximum raw video resolution in 720p is equal to full image resolution divided by 3 horizontally and by 5 vertically - that is, a stretched image.

The above applies to all Canon EOS cameras supported by Magic Lantern at the time of writing.
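The division rule above can be checked with a few lines of Python. This is only a worked example: the function name is hypothetical, the input numbers are the dcraw resolutions quoted above, and the 720p result simply follows the stated "3 horizontally, 5 vertically" rule. Integer division is an assumption for the case where the numbers do not divide evenly.

```python
def binned_resolution(full_width, full_height, h_factor, v_factor):
    """Divide the full sensor resolution by the binning factors (hypothetical helper)."""
    # Assumption: any remainder is simply dropped.
    return full_width // h_factor, full_height // v_factor


# 1080p mode: divide by 3 in both dimensions
print(binned_resolution(5634, 3753, 3, 3))  # 5D Mark II  -> (1878, 1251)
print(binned_resolution(5796, 3870, 3, 3))  # 5D Mark III -> (1932, 1290)

# 720p mode: divide by 3 horizontally and by 5 vertically
print(binned_resolution(5796, 3870, 3, 5))  # 5D Mark III -> (1932, 774)
```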

Nightly build

Noise

O

Overclocking

Overexposure

A combination of exposure and ISO that results in parts of the image appearing white (reaching saturation).

P

Pattern Noise

Pattern noise is noise which has a defined pattern. Pattern noise is quite apparent and visually disruptive as our perception is adapted to picking out patterns.

Photosite

Sometimes referred to as a → sensel, a photosite is similar to a pixel. But whereas a pixel describes a single element of a light producing device, a photosite refers to a single element in a light sensing device.

Pixel

A pixel is the smallest addressable element in an image or display. One million pixels equal a → Megapixel

Pixel Binning

In Live View and when recording video, the camera software may not process every single photosite from the camera sensor, particularly when the video resolution is much smaller than image sensor resolution (e.g. 1920×1080 vs 5760×3840). Instead it uses a process of pixel binning, where it combines photosites from the sensor in some fashion to derive an output at the required resolution.
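To give a rough idea of "combining photosites", here is a toy sketch in Python that averages blocks of values from a small grid. It is an oversimplification and not how the camera does it: the real process happens in sensor hardware on a colour (Bayer) mosaic and may also skip lines. The function name and the averaging step are assumptions made for illustration.

```python
def bin_blocks(image, h_factor, v_factor):
    """Average h_factor x v_factor blocks of a 2D list (toy model, ignores colour)."""
    rows = len(image) // v_factor
    cols = len(image[0]) // h_factor
    out = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            # Collect all values belonging to one output element.
            block = [image[r * v_factor + dy][c * h_factor + dx]
                     for dy in range(v_factor)
                     for dx in range(h_factor)]
            out_row.append(sum(block) / len(block))
        out.append(out_row)
    return out


# A 6x6 test grid with values 0..35, binned 3x3 down to 2x2
grid = [[r * 6 + c for c in range(6)] for r in range(6)]
print(bin_blocks(grid, 3, 3))  # [[7.0, 10.0], [25.0, 28.0]]
```

Calling `bin_blocks(grid, 1, 3)` instead combines 3 rows but leaves the columns alone, which gives a 6 wide, 2 high result: a stretched image that needs correction afterwards.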

PoC

Port

A piece of software adapted to run on a particular hardware platform.

In our case, a Magic Lantern port is a version of Magic Lantern adapted to work on a particular camera model (usually tied to a specific Canon firmware version). For example, “40D ML port” would refer to a version of Magic Lantern adapted to run on the Canon EOS 40D.

→ Wikipedia: Porting (moving software to a different system)

Proof of Concept (PoC)

In the ML microcosm, PoC means a specific task/feature has been proven to be feasible.

For example: Running ML on a new camera requires (mandatory!) custom code (like ML) to run along with Canon's firmware. If there is any custom code running on it - no matter if it is as small and insignificant as “Hello, World!” shown on display - Proof of Concept is established.

It does not mean there is a port in progress or anyone is actually working on it! It simply means that a person with a lot of spare time and some skills should be able to port ML to this camera.

Pull Request

Developer lingo: If a developer wants to add their own changes to ML code, they have to create a “pull request”. A pull request gets approved by other devs (or not) after review. Approved pull requests get merged.

Q

QEMU

QEMU (Quick Emulator) is a kind of virtual machine able to emulate several different computer systems, including those based on x86 and ARM architectures. We have created a custom version of QEMU, which is able to emulate a camera running Canon firmware on a regular (Intel-based) PC.

Such an emulator allows developers to test embedded programs in a safe environment, without potential hazard for the camera, and provides debugging and firmware analysis capabilities that are not otherwise available on the physical camera hardware. It is also useful for running automated tests of our software, e.g. for Continuous Integration.

Making a ROM dump work in QEMU is a very early step in porting a camera.

→ README.rst: How can I run Magic Lantern in QEMU?

→ HACKING.rst: EOS firmware in QEMU - development and reverse engineering guide

R

RAW recording

ML feature allowing movie recording as a raw data stream to memory card.

Pro: Higher image quality compared to Canon's H.264 implementation.

Cons:

Very high data rates. Example: H.264 in 1080p25 in IPB: Around 5.5 MByte/s. MLV in 720p25 uncompressed: Around 38 MByte/s.

Requires postprocessing because of ML's own raw video format → MLV

High processor load may conflict with the use of ML overlays like zebras, focus peaking, histograms, …

The in-camera preview will lag (processor load too high).
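Where does a number like 38 MByte/s come from? A back-of-the-envelope calculation in Python, under assumptions that are not stated above: a 1280×720 frame, 14 bits per photosite, no compression, no metadata overhead, and 1 MByte counted as 2^20 bytes. The function name is made up for this example.

```python
def raw_data_rate(width, height, fps, bits_per_pixel=14):
    """Uncompressed raw video data rate in bytes per second (rough estimate)."""
    bytes_per_frame = width * height * bits_per_pixel / 8
    return bytes_per_frame * fps


rate = raw_data_rate(1280, 720, 25)
print(rate)                       # 40320000.0 bytes per second
print(round(rate / 2**20, 1))     # 38.5 (MByte/s, with 1 MByte = 2**20 bytes)
```

With these assumptions the result lands close to the figure quoted above. Higher resolutions scale linearly with the number of photosites per frame.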

RAW video

Read Noise

Read noise is the deviation (read: error) between the digital raw output value (read: final image) and the number of captured photons (read: light).

Register

Dev lingo

Resolution Chart

Reverse Engineering

A process of analyzing the camera internals (both hardware and software) in order to understand how they work and how to interface our code with the existing (proprietary) Canon firmware. For example, figuring out which Canon firmware functions can be called from our code, what parameters are expected by these functions and how to use them safely in Magic Lantern, would require reverse engineering.

We have to do this because Canon does not publish the source code of their firmware (their code is proprietary software), and they do not have any publicly documented way for running custom software directly on the camera. To be fair, Canon does offer SDKs for custom application development (EDSDK and CCAPI), but any code developed using these SDKs would require a computer or a smartphone to run. Our code runs directly on the camera.

Techniques:

- analyzing high-res PCB pictures (requires disassembling the camera);

- interacting with the firmware over UART;

- running and analyzing Canon firmware in QEMU;

- disassembling/decompiling the firmware - see ARM architecture;

- capturing detailed logs during some particular camera operation (such as camera startup, shutdown, changing ISO, capturing an image, entering LiveView and so on).

Caveat - this process may have legal implications:

→ Magic Lantern FAQ - Is it legal?

→ About Magic Lantern - Legality

→ Safe Hacking Toolbox (our internal rules)

→ EFF Reverse Engineering FAQ

→ Cyberlaw Clinic Security Researcher's Guide

→ Wikipedia: Reverse Engineering - Legality

ROM Dumper

A piece of software that runs on your camera and saves the contents of the camera's internal memory (ROM) to some external media (usually SD/CF card, but can also be done over UART, USB or other interfaces - even by encoding the data using plain LED blinks).

Such a ROM dump may enable a developer to run the camera's software inside a software emulator on a PC.

The existence of a particular ROM dumper doesn't say anything about porting progress, or whether a developer is actually working on that camera. It usually shows that we are able to execute at least some code on the camera - which is an essential step - but somebody still has to perform Reverse Engineering and write the code to complete the Port.

Rolling Shutter

A method of reading out images from the camera sensor, where image rows are read out one by one, sequentially.

Canon cameras use this method in LiveView (also when recording video, of course).

Tip: in Magic Lantern, the time required to read out an image in LiveView is displayed in the FPS override submenu. On Canon cameras currently supported by ML, image readout time must be smaller than the time allocated for one video frame (1/FPS). On other cameras, it might not be the case.
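The timing condition from the tip above, written as a tiny Python check. The function name and the readout times used below are hypothetical; real values are shown in the FPS override submenu.

```python
def readout_fits(readout_time_s, fps):
    """True if one image readout fits into the time of one video frame (1/FPS)."""
    frame_time_s = 1.0 / fps
    return readout_time_s < frame_time_s


# Hypothetical readout times at 25 FPS (one frame lasts 0.04 s)
print(readout_fits(0.030, 25))  # True
print(readout_fits(0.045, 25))  # False
```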

→ Machine Vision Kamera: Rolling versus Global shutter

→ DPReview: Rolling shutter explained with simple side-by-side examples

→ ML Forum: Rolling shutter measurements from flickering lights

Rolling Shutter with Global Reset

Image readout process where rows on the image sensor start capturing light all at once (as with a global shutter), but they are read out one by one, sequentially.

Canon cameras use this method for still photo capture, relying on the mechanical shutter to block the light while the image is read out from the sensor.

→ 1stVision: What are global shutters and rolling shutters in machine vision cameras?

→ FRSP

S

SD-card overclocking

sd_uhs

A highly experimental module used to increase the read/write speed on SD cards operating in UHS-I mode.

Caveats:

- some cards will work well, while others will fail;

- data integrity is not guaranteed (use/experiment at your own risk!);

- at least one SD card was physically damaged while using this module.

At the time of writing, sd_uhs is compatible with DIGIC 5 models only. Earlier hardware (in particular, DIGIC 4) is believed to be incompatible with this technique (details). Later models (DIGIC 6 and newer) were not explored yet in this direction.

Semi Bricking

→ Bricking

Sensor

The device in the camera that captures light and converts it to an analog voltage.

Sensor Readout

Shot Noise

In simplest terms, shot noise describes the noise present in light itself. In essence, from a standpoint strictly related to noise, capturing more light is always better.

See ETTR
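A short worked example of why more light helps, assuming photon arrival follows Poisson statistics (the usual model for shot noise): for N captured photons the noise is sqrt(N), so the signal-to-noise ratio is N / sqrt(N) = sqrt(N). The function name is made up and the photon counts are arbitrary.

```python
import math


def shot_noise_snr(photons):
    """Signal-to-noise ratio limited by shot noise only (Poisson assumption)."""
    noise = math.sqrt(photons)  # standard deviation of a Poisson process
    return photons / noise


print(shot_noise_snr(100))  # 10.0
print(shot_noise_snr(400))  # 20.0
```

Four times the light gives only twice the signal-to-noise ratio, but it never gets worse with more light (until the sensor saturates).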

Shutter Bug (EOS M)

Symptom: EOS M can focus by half-pressing the shutter button, but full-press (photo capture) won't work. Using the touchscreen may lock up the camera completely, and you have to remove the battery.

Cause: Most likely a so-called “race condition” at the very beginning of the camera's startup process. The time required by the Canon bootloader to mount the filesystem and load Magic Lantern is important (if it takes too long, it will trigger the shutter bug). ATM ML devs cannot fix that.

Cure: None, at least in foreseeable future.

Workarounds:

- Twist your lens out of its locked position in the mount. Power up the camera. Twist the lens back into the locked position.

- Use a fast and/or a small card!

Disclaimer: This entry contains no practical joke.

Silent pic

A picture captured without mechanical shutter movements.

Main flavors:

- LiveView frame. Usually limited resolution, but this can be increased with crop_rec (full resolution possible on some models). Long exposures are generally not possible with this technique (unless one records a sequence of images and stacks them). This process uses a rolling shutter readout.

- FRSP aka Full Resolution Silent Picture (using the “normal” still image capture code, but without actuating the mechanical shutter). Short exposures are not possible, as the camera keeps the sensor “open” during the readout process, thinking the mechanical shutter would block the light. Long exposures are OK, with some limitations. This process uses a rolling shutter with global reset readout.

Esoteric flavors:

- Slit-scan

→ ML Forum: 14bit RAW DNG silent pics! (precursor of Raw Video)

→ ML Forum: Full-resolution silent pictures

Simplified Histobar

In Live View, there is a Simplified Histobar which works similarly to a histogram.

Magic Lantern has raw based exposure feedback.

Skipping

Spotmeter

The spotmeter is a tool used to meter a particular area of the scene. For instance, you may want to meter a highlight, a midtone or a shadow.

Magic Lantern has raw based exposure feedback.

Stable Build

Stub

T

U

UART

A serial protocol interface/connector inside your cam. ML devs suppose it was implemented by Canon for diagnostics and for low-level maintenance/repair.

By accessing it, ML devs can read a lot of internal low-level data hidden from the Canon menu. And in the case of very-hard-to-hack cameras there may be a way to write data, too (for example: enable the bootflag).

Physically accessing the UART in older cams required opening the camera and maybe even soldering wires to the camera's circuit board. Later cams have a UART connector on board for easier access.

On some of Canon's DIGIC 7, 8 and X cameras this UART is accessible without opening the camera, by just removing a reusable rubber cover.

USB

Generally used for debugging. Information is transmitted over the Picture Transfer Protocol. Canon has added several commands alongside the standard ones, such as the one that can be used to write the bootflag (See https://github.com/petabyt/mlinstall)

Underexposure

A combination of exposure and ISO that results in the image (or parts thereof - particularly wanted parts of the image) losing shadow detail.

Unified (build)

V

VAF

Video Anti-Aliasing Filter

W

X

Y

YAMLMO

Yet Another ML Menu Option. You may get this response after posting a feature request asking for an additional menu option. ML staff is reluctant to add any new menu options because they feel strongly there already are too many and they want to avoid spreading the disease.

Z

Zebras

Zebras provide a graphical overlay marking the areas of the image which are overexposed or underexposed.

Magic Lantern has raw based exposure feedback.